One of the amazing things about working in software engineering is the sheer number of open-source APIs available on the web. Anyone can pick up a tool that abstracts away the underlying complexity, use it without deep knowledge of the area, and learn more about the subject along the way. That was my intention when I spent a couple of weekends exploring Keras. Keras is a high-level TensorFlow API, and since I'm not a data scientist, it was a perfect introductory tool for understanding how AI/NLP, neural networks and CNNs work in practice.

The complete code can be found in the following GitHub repo: TicketActivityClassifier.

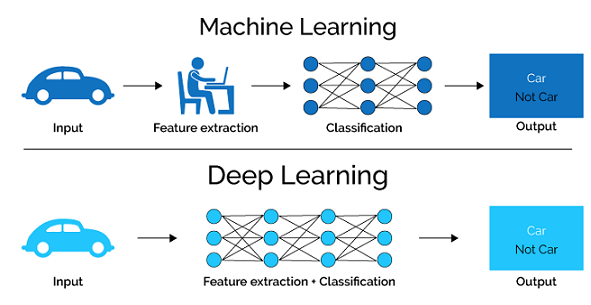

This is yet another text classification model: a neural network classifier that follows the basic machine learning workflow outlined further down.

What is Keras?

Keras is an open-source high-level neural networks API written in Python. It was developed with a focus on enabling fast experimentation and has become one of the most popular AI libraries for building and training deep learning models.

Keras is built on top of other deep learning libraries such as TensorFlow, Theano, and CNTK, and provides a simplified interface for defining and training deep neural networks. It supports a wide range of network architectures, including convolutional neural networks (CNNs), recurrent neural networks (RNNs), and more.

One of the key features of Keras is its ease of use and flexibility. It allows users to quickly prototype and experiment with different network architectures and hyperparameters, and provides a simple and intuitive interface for defining and training models. It also includes a range of pre-trained models and tools for data preparation and augmentation.

Keras has a large and active community of users and developers, who contribute to its development and provide support through forums and other resources. It also supports integration with other popular AI libraries and tools, making it a powerful and versatile tool for deep learning research and application development.

Keras Ticket Classification Model

The purpose of this project is to build a model that predicts a ticket's classification (its Activity) from the ticket's short description and category.

Get/Prepare dataset -> Word vectors and embedding layers -> Model creation -> Model evaluation and persistence -> Dataset prediction

Get/Prepare dataset

First, using Pandas we read the CSV input file and convert it into a Pandas DataFrame.

    import logging
    import os

    import pandas as pd

    # myapp_config is the project's own configuration module (its import is not shown in this excerpt)

    def load_prepare_dataset(self, dataset, *args, **kwargs):
        """
        Load the dataset from CSV and remove rows with nulls in the columns
        that are mandatory for training: ShortDescription and Activity.
        """
        logging.info("Preparing to read csv dataset: " + str(dataset))
        df = pd.read_csv(os.path.join(myapp_config.DATASETS_PATH, dataset), dtype=str)

Still using Pandas, we drop every row that has a null value in a column that is mandatory for model training, using the ‘.dropna()’ method. The ‘.head()’ method of the DataFrame object then lets us log the first five rows of the dataset.

        # Drop rows that are missing any of the columns required for training
        drop_if_na = ["ShortDescription", "Activity"]
        df.dropna(subset=drop_if_na, inplace=True)
        logging.info(df.head())
        return df
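As a quick illustration of what the null-removal step does, here is a minimal sketch on a tiny made-up DataFrame. Only the column names come from the article; the rows are hypothetical. A row is dropped only when one of the required columns is missing, so a null in Category alone does not remove the row.

```python
import pandas as pd

# Hypothetical sample rows; only the column names are taken from the article.
df = pd.DataFrame(
    {
        "ShortDescription": ["Reset password", None, "Unlock account", "VPN down"],
        "Category": ["Account", "Account", None, "Network"],
        "Activity": ["Password reset", "Password reset", "AD User Issue", None],
    }
)

# Columns that must be present for a row to be usable in training.
required = ["ShortDescription", "Activity"]

# Rows 1 and 3 are dropped; row 2 survives because Category is not required.
clean = df.dropna(subset=required)
print(clean)
```

Passing the whole list to `subset` has the same effect as calling `dropna` once per column, because the default `how="any"` drops a row if any listed column is null.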

Word vectors and embedding layers

Machine learning models expect numeric input, so the text has to be represented as numbers. I use the Keras Tokenizer for this: it assigns an integer index to each of the 10000 (vocab_size) most frequently used words, and texts_to_matrix() then turns every text into a fixed-length TF-IDF vector over that vocabulary.

    from sklearn.model_selection import train_test_split
    from tensorflow.keras.preprocessing.text import Tokenizer  # assumed import path

    # Split descriptions (X), categories (Y) and activity labels (Z) in one call
    # so that the three series stay aligned row by row
    X_train, X_test, Y_train, Y_test, Z_train, Z_test = train_test_split(
        data["ShortDescription"], data["Category"], data["Activity"], test_size=0.15
    )

    # define Tokenizer with Vocab Sizes
    vocab_size = 10000
    tokenizer = Tokenizer(num_words=vocab_size)
    tokenizer2 = Tokenizer(num_words=vocab_size)
    tokenizer.fit_on_texts(X_train)
    tokenizer2.fit_on_texts(Y_train)

    # Vectorize every text as a TF-IDF row of length vocab_size
    x_train = tokenizer.texts_to_matrix(X_train, mode="tfidf")
    x_test = tokenizer.texts_to_matrix(X_test, mode="tfidf")
    y_train = tokenizer2.texts_to_matrix(Y_train, mode="tfidf")
    y_test = tokenizer2.texts_to_matrix(Y_test, mode="tfidf")
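To make the vectorization step more concrete, here is a minimal pure-Python sketch of the idea behind a frequency-ranked vocabulary and a fixed-width matrix. It is not the Keras implementation: the function names are hypothetical, and it uses raw word counts where the article uses mode="tfidf", which reweights those counts. Like the Keras Tokenizer, as far as I understand it, the sketch reserves index 0 and therefore keeps only the `num_words - 1` most frequent words.

```python
from collections import Counter


def fit_vocabulary(texts, num_words):
    """Rank words by frequency; index 1 is the most frequent word, 0 is reserved."""
    counts = Counter(word for text in texts for word in text.lower().split())
    ranked = [word for word, _ in counts.most_common(num_words - 1)]
    return {word: index for index, word in enumerate(ranked, start=1)}


def texts_to_count_matrix(texts, word_index, num_words):
    """Return one row of length num_words per text; column j counts word j."""
    matrix = []
    for text in texts:
        row = [0] * num_words
        for word in text.lower().split():
            index = word_index.get(word)
            if index is not None:  # words outside the vocabulary are ignored
                row[index] += 1
        matrix.append(row)
    return matrix


# Hypothetical ticket descriptions.
texts = ["reset password", "reset account password", "vpn connection down"]
word_index = fit_vocabulary(texts, num_words=5)
matrix = texts_to_count_matrix(texts, word_index, num_words=5)

print(word_index)
for row in matrix:
    print(row)
```

Every text ends up with the same length regardless of how many words it contains, which is what lets the matrix feed a dense input layer of size `vocab_size`.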

The target labels (Activity) are one-hot encoded with scikit-learn's LabelBinarizer, and the class names are written to classes.txt so that predictions can later be mapped back to label names.

    from sklearn.preprocessing import LabelBinarizer

    # Create classes file
    encoder = LabelBinarizer()
    encoder.fit(Z_train)
    text_labels = encoder.classes_
    with open(os.path.join(myapp_config.OUTPUT_PATH, "classes.txt"), "w") as f:
        for item in text_labels:
            f.write("%s\n" % item)

    # One-hot encode the activity labels for training and testing
    z_train = encoder.transform(Z_train)
    z_test = encoder.transform(Z_test)
    num_classes = len(text_labels)
    logging.info("Numbers of classes found: " + str(num_classes))
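The label-encoding step can be tried in isolation. The sketch below uses scikit-learn's `LabelBinarizer` on a handful of hypothetical activity labels and shows how `classes_`, `transform` and `inverse_transform` relate to each other.

```python
from sklearn.preprocessing import LabelBinarizer

# Hypothetical activity labels for illustration.
train_labels = [
    "Password reset",
    "Application Access",
    "DB Connection",
    "Password reset",
]

encoder = LabelBinarizer()
encoder.fit(train_labels)

# classes_ holds the distinct labels in sorted order; column i of the
# one-hot matrix corresponds to classes[i].
classes = list(encoder.classes_)
one_hot = encoder.transform(["DB Connection", "Password reset"])

# inverse_transform maps one-hot rows back to label names.
decoded = list(encoder.inverse_transform(one_hot))

print(classes)
print(one_hot)
print(decoded)
```

One caveat worth knowing: with exactly two classes, `LabelBinarizer` returns a single column instead of two, so a softmax output layer sized with `len(classes_)` only matches the targets when there are three or more classes.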

Model creation

The model uses ReLU as the activation function in its first dense layer and softmax in the output layer. Softmax extends logistic regression to multi-class problems by assigning a probability to each class, with the probabilities summing to 1. The model has two inputs: the ticket short description and the category. Categorical cross-entropy, which measures how far the predicted probabilities are from the true class, is the loss function for this multi-class classification problem, and Adam is used as the optimizer.
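To make the softmax and categorical cross-entropy description concrete, here is a small NumPy sketch of both functions applied to one hypothetical sample with three classes. It illustrates the math only and is not how Keras implements them internally.

```python
import numpy as np


def softmax(logits):
    """Turn raw scores into probabilities that sum to 1 along the last axis."""
    shifted = logits - np.max(logits, axis=-1, keepdims=True)  # numerical stability
    exp = np.exp(shifted)
    return exp / np.sum(exp, axis=-1, keepdims=True)


def categorical_crossentropy(y_true, y_prob, eps=1e-7):
    """Negative log-probability assigned to the true class, per sample."""
    y_prob = np.clip(y_prob, eps, 1.0 - eps)  # avoid log(0)
    return -np.sum(y_true * np.log(y_prob), axis=-1)


# Hypothetical raw scores for one ticket and its one-hot encoded true class.
logits = np.array([[2.0, 1.0, 0.1]])
y_true = np.array([[1.0, 0.0, 0.0]])

probs = softmax(logits)
loss = categorical_crossentropy(y_true, probs)

print(probs)
print(loss)
```

The loss shrinks towards 0 as the probability of the true class approaches 1, and grows quickly when the model puts its confidence in a wrong class.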

    from tensorflow.keras.layers import Dense, Dropout, Input  # assumed import path
    from tensorflow.keras.models import Model  # assumed import path

    # Model creation and summarization
    batch_size = 100

    # Each input is a TF-IDF vector of length vocab_size
    input1 = Input(shape=(vocab_size,), name="main_input")
    x1 = Dense(512, activation="relu")(input1)
    x1 = Dropout(0.5)(x1)

    # Note: in this excerpt cat_input is declared but never connected to main_output
    input2 = Input(shape=(vocab_size,), name="cat_input")

    main_output = Dense(num_classes, activation="softmax", name="main_output")(x1)
    model = Model(inputs=[input1, input2], outputs=[main_output])
    model.compile(
        loss="categorical_crossentropy", optimizer="adam", metrics=["accuracy"]
    )
    model.summary()
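The excerpt above declares `cat_input` but does not show how it reaches the output layer. One common way to combine two inputs is to give each its own dense branch and concatenate the results before the softmax layer (in Keras this would typically be a `Concatenate` layer). The NumPy forward pass below sketches that idea under this assumption; the layer sizes, random weights and variable names are all hypothetical, and this is not necessarily the architecture used in the repository.

```python
import numpy as np

rng = np.random.default_rng(0)


def dense(x, weights, bias):
    """Affine transformation of a dense layer."""
    return x @ weights + bias


def relu(x):
    return np.maximum(x, 0.0)


def softmax(z):
    shifted = z - np.max(z, axis=-1, keepdims=True)
    exp = np.exp(shifted)
    return exp / np.sum(exp, axis=-1, keepdims=True)


# Hypothetical sizes: 4 tickets, small vocabularies, 3 target classes.
batch, vocab_desc, vocab_cat, hidden, num_classes = 4, 20, 8, 16, 3
x_desc = rng.random((batch, vocab_desc))  # stands in for the description vectors
x_cat = rng.random((batch, vocab_cat))    # stands in for the category vectors

# Randomly initialised weights; a real model would learn these during training.
w_desc, b_desc = rng.normal(size=(vocab_desc, hidden)), np.zeros(hidden)
w_cat, b_cat = rng.normal(size=(vocab_cat, hidden)), np.zeros(hidden)
w_out, b_out = rng.normal(size=(2 * hidden, num_classes)), np.zeros(num_classes)

# One ReLU branch per input, merged by concatenation, then a softmax output.
h_desc = relu(dense(x_desc, w_desc, b_desc))
h_cat = relu(dense(x_cat, w_cat, b_cat))
merged = np.concatenate([h_desc, h_cat], axis=1)
probs = softmax(dense(merged, w_out, b_out))

print(probs.shape)
```

With this layout both the description and the category influence every class probability, which is the behaviour the two-input description suggests.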

Model evaluation and persistence

The model's ‘fit()’ method trains the model for a fixed number of epochs (iterations over the dataset). We pass it the training vectors X and Y as inputs and the encoded labels Z as targets.

    # Model training
    history = model.fit(
        [x_train, y_train],
        z_train,
        batch_size=batch_size,
        epochs=10,
        verbose=1,
        validation_split=0.10,
    )
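The effect of `validation_split` and `batch_size` on the amount of work per epoch is simple arithmetic. According to the Keras documentation for `fit()`, the validation samples are taken from the end of the provided data, before shuffling. The sketch below reproduces that arithmetic with hypothetical helper names and a made-up sample count.

```python
import math


def split_for_validation(num_samples, validation_split):
    """Return (training samples, validation samples) for a given split fraction."""
    split_at = int(math.floor(num_samples * (1.0 - validation_split)))
    return split_at, num_samples - split_at


def batches_per_epoch(num_training_samples, batch_size):
    """Number of weight updates in one epoch; the last batch may be smaller."""
    return math.ceil(num_training_samples / batch_size)


# Hypothetical dataset size; the split and batch size match the article's values.
train_count, val_count = split_for_validation(num_samples=1050, validation_split=0.10)
steps = batches_per_epoch(train_count, batch_size=100)

print(train_count, val_count, steps)
```

Because the split is positional, a dataset that is sorted by label should be shuffled before calling `fit()`, otherwise the validation set may contain only a few classes.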

    # Model evaluation on the held-out test set
    score = model.evaluate(
        [x_test, y_test], z_test, batch_size=batch_size, verbose=1
    )
    # score is [loss, accuracy] because the model was compiled with metrics=["accuracy"]
    logging.info("Test accuracy: %s", score[1])
    self.accuracy = score[1]
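For a one-hot encoded multi-class problem, the accuracy metric boils down to comparing the index of the highest predicted probability with the index of the true class. A minimal NumPy sketch with hypothetical predictions:

```python
import numpy as np


def categorical_accuracy(y_true_one_hot, y_prob):
    """Fraction of samples whose most probable class matches the true class."""
    true_classes = np.argmax(y_true_one_hot, axis=1)
    predicted_classes = np.argmax(y_prob, axis=1)
    return float(np.mean(true_classes == predicted_classes))


# Hypothetical one-hot labels and predicted probabilities for four tickets.
y_true = np.array([[1, 0, 0], [0, 1, 0], [0, 0, 1], [0, 1, 0]])
y_prob = np.array(
    [
        [0.7, 0.2, 0.1],  # correct
        [0.1, 0.8, 0.1],  # correct
        [0.3, 0.4, 0.3],  # wrong: predicts class 1, true class is 2
        [0.2, 0.5, 0.3],  # correct
    ]
)

accuracy = categorical_accuracy(y_true, y_prob)
print(accuracy)
```

Accuracy only looks at the top prediction, so it says nothing about how confident the model was; the loss value in `score[0]` captures that part.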

    # serialize model to JSON
    model_json = model.to_json()
    with open(
        os.path.join(
            myapp_config.OUTPUT_PATH, "model_" + myapp_config.MODEL_NAME + ".json"
        ),
        "w",
    ) as json_file:
        json_file.write(model_json)

    # save the full model as an HDF5 (.h5) file
    model.save(
        os.path.join(
            myapp_config.OUTPUT_PATH, "model_" + myapp_config.MODEL_NAME + ".h5"
        )
    )

    # Save Tokenizer i.e. Vocabulary
    # (only `tokenizer` is persisted in this excerpt; `tokenizer2` is not)
    with open(
        os.path.join(
            myapp_config.OUTPUT_PATH,
            "tokenizer" + myapp_config.MODEL_NAME + ".pickle",
        ),
        "wb",
    ) as handle:
        pickle.dump(tokenizer, handle, protocol=pickle.HIGHEST_PROTOCOL)
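The excerpt pickles only `tokenizer`, while training fitted a second tokenizer on the Category column. One possible way to keep the two together is to pickle them in a single dictionary and restore both at prediction time. The sketch below shows the round trip using only the standard library; the plain dictionaries are stand-ins for the fitted tokenizers, and the file name and keys are hypothetical.

```python
import os
import pickle
import tempfile

# Stand-ins for the fitted objects; in the article these would be the two
# Keras tokenizers (tokenizer and tokenizer2).
preprocessing = {
    "description_tokenizer": {"reset": 1, "password": 2},
    "category_tokenizer": {"account": 1, "network": 2},
}

with tempfile.TemporaryDirectory() as output_path:
    path = os.path.join(output_path, "preprocessing.pickle")

    # Persist both objects in one file.
    with open(path, "wb") as handle:
        pickle.dump(preprocessing, handle, protocol=pickle.HIGHEST_PROTOCOL)

    # Restore them, as a prediction service would do at start-up.
    with open(path, "rb") as handle:
        restored = pickle.load(handle)

print(restored["category_tokenizer"])
```

Keeping the preprocessing objects next to the model file matters because a model trained on one vocabulary produces meaningless output when fed vectors built from another.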

Dataset Prediction

    # ShortDescriptions
    x_pred = self._tokenizer.texts_to_matrix(short_description, mode="tfidf")
    # Categories
    y_pred = self._tokenizer.texts_to_matrix(category, mode="tfidf")
    model_predictions = self._model.predict(
        {"main_input": x_pred, "cat_input": y_pred}
    )

    logging.info("Running Individual Ticket Prediction")
    # Indices of the five most probable classes, highest probability first
    sorting = (-model_predictions).argsort()
    sorted_ = sorting[0][:5]

    top5_pred_probs = []
    for value in sorted_:
        predicted_label = self.labels[value]
        # convert the probability to a percentage with two decimals
        prob = (model_predictions[0][value]) * 100
        prob = "%.2f" % round(prob, 2)
        top5_pred_probs.append([prob, predicted_label])

    output = {
        "short_description": short_description[0],
        "category": category[0],
        "top5_pred_probs": top5_pred_probs,
    }

    with open(
        os.path.join(myapp_config.OUTPUT_PATH, "activity_predict_output.json"), "w"
    ) as fp:
        ...  # the excerpt ends here in the original
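The ranking logic can be tested without a trained model. The sketch below applies the same negate-and-argsort trick to a hypothetical probability vector and serializes the result as JSON. The labels, probabilities and the `top_k_predictions` helper are made up for illustration, and writing the dictionary with the `json` module is an assumption about how the truncated excerpt continues.

```python
import json

import numpy as np


def top_k_predictions(probabilities, labels, k=5):
    """Return [percentage, label] pairs for the k most probable classes."""
    order = np.argsort(-probabilities)[:k]  # negate so the largest come first
    return [["%.2f" % (probabilities[i] * 100), labels[i]] for i in order]


# Hypothetical labels and model output for a single ticket.
labels = [
    "AD User Issue",
    "Application Access",
    "DB Connection",
    "Password reset",
    "Script Execution",
    "VPN",
]
probabilities = np.array([0.60, 0.04, 0.01, 0.30, 0.03, 0.02])

top5 = top_k_predictions(probabilities, labels)
output = {
    "short_description": "Unlock account",
    "category": "Account Administration",
    "top5_pred_probs": top5,
}

# Percentages are stored as strings, so the dictionary is JSON-serializable as is.
serialized = json.dumps(output, indent=2)
print(serialized)
```

Returning the top five candidates rather than a single label lets a human reviewer see how close the runner-up classes were.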

SYNOPSIS

Creating the Model

    from TicketClassifierModel import TicketClassifierModel

    ticket_model = TicketClassifierModel(
        training_dataset='TicketTrainingData.csv',
        testing_dataset='TicketTestingData.csv',
        recreate_model=True,
    )
    ticket_model.evaluate_model(testing_dataset='TicketTestingData.csv')

Making Predictions

    from ActivityClassify import TicketActivityPredict

    classifier = TicketActivityPredict()

    # Return top 5 prediction scores
    prediction = classifier.predict_text(
        ShortDescription='Unlock of an Active Directory Admin or Server Account account or account',
        Category='Account Update Account Administration',
    )
    print(prediction)
    # {'short_description': 'Unlock of an Active Directory Admin or Server Account account or account', 'category': 'Account Update Account Administration', 'top5_pred_probs': [['87.09', 'AD User Isse'], ['12.90', 'Password reset'], ['0.00', 'Application Access'], ['0.00', 'Script Execution'], ['0.00', 'DB Connection']]}

LINKS

For more information about text classification with Keras, I recommend the following links.

- https://realpython.com/python-keras-text-classification/

- https://keras.io/api/models/model_training_apis/

- https://keras.io/examples/structured_data/structured_data_classification_from_scratch/

- Feature 1 image source: https://semiengineering.com/deep-learning-spreads/